Good morning! Hope you’re doing well!

AI this week was about two things: scale and control.

Record money flowed into OpenAI while new models started acting less like chatbots and more like full‑blown operators.

OpenAI wants ChatGPT to be the place you work, code and search.

At the same time, models like GPT‑5.4 and Gemma 4 are built to run workflows, use your computer and plug into everything.

The pattern is clear: AI that does tasks, not just answers questions.

MAIN STORIES

Source: Gemini 3 Pro Image

TL;DR: OpenAI just completed Silicon Valley’s largest funding round ever and is using it to turn ChatGPT into an all‑in‑one AI platform.

Key takeaways

OpenAI secured $122 billion in committed capital, valuing the company at around $852 billion and making it one of the most valuable private firms in history.

ChatGPT reportedly has over 900 million weekly active users and more than 50 million paying subscribers, with about $2 billion in monthly revenue.

The company is pitching a ChatGPT “super app” that bundles chat, coding, search and agent workflows into a single interface for consumers and enterprises.

Why it matters

It signals a strategic shift from “API provider” toward being the default operating layer for knowledge work, where many users and companies live inside ChatGPT instead of jumping between separate tools.

TL;DR: Google released Gemma 4, a family of open‑weight models under the Apache 2.0 license that run from phones to data centers and aim to set a new bar for open AI.

Key takeaways

Gemma 4 arrives in four sizes (effective 2B, 4B, 26B MoE and 31B dense), all designed for advanced reasoning, multimodal input and agentic workflows.

For the first time, Google is licensing these models under Apache 2.0, dropping earlier restrictive terms and allowing broad commercial use and modification.

The 31B model already ranks near the top of open model leaderboards while being efficient enough to run on a single high‑end GPU when quantized.
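Curious why quantization is the difference between "needs a cluster" and "fits on one card"? Here is a rough back-of-envelope sketch in Python. It counts weight storage only and ignores the KV cache, activations and runtime overhead, so real usage will be higher. The function name and the bit widths (16, 8 and 4) are our own illustrative assumptions, not figures from Google.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes).

    Assumption: every parameter is stored at the same bit width, with no
    extra overhead for quantization scales, KV cache or activations.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Compare the 31B dense model at three common (assumed) precisions.
for bits in (16, 8, 4):
    print(f"31B dense at {bits}-bit: ~{weight_memory_gb(31, bits):.1f} GB")
```

By this estimate the weights take about 62 GB at 16-bit and about 15.5 GB at 4-bit. The 4-bit figure sits well inside the 24 GB or more found on high-end cards, which is what makes the single-GPU claim plausible.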

Why it matters

Expect Gemma 4 to show up quickly in local deployments, edge devices and cost‑sensitive production stacks.

TL;DR: OpenAI released GPT‑5.4 with built‑in “computer‑use” skills, massive context and lower error rates, pushing AI from assistant to hands‑on operator.

Key takeaways

GPT‑5.4 can control desktops and browsers directly, clicking, typing and navigating apps to complete multi‑step workflows across software tools.

The model supports up to 1 million tokens of context and posts record scores on OS operations and web interaction benchmarks like OSWorld‑Verified and WebArena Verified.

OpenAI reports roughly one‑third fewer factual errors compared with previous versions and positions GPT‑5.4 as its flagship for serious professional work.

Why it matters

If it works reliably, GPT‑5.4 will sit behind many agent products that operate spreadsheets, documents, emails and internal tools without humans manually driving every click.

For teams already experimenting with agents, this release will likely push more real workloads into automation, not just demos.

AI CULTURE

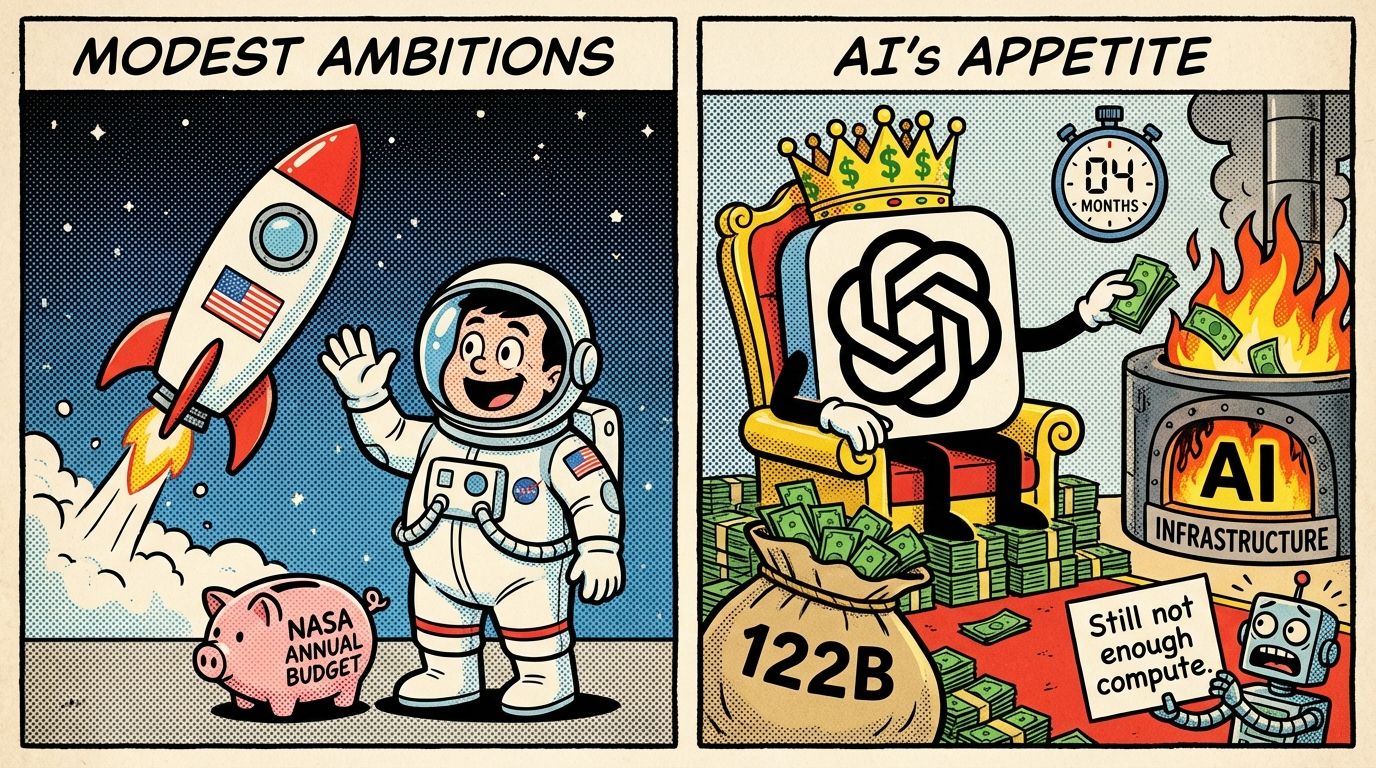

Meme of the week:

Can OpenAI spend NASA’s yearly budget in four months? 🤔

Quick Hits 🎯

Microsoft expands Copilot and introduces Cowork - New multi‑model workflows and a Cowork agent that critiques answers and runs longer tasks inside Microsoft 365.

Salesforce turns Slackbot into an autonomous assistant - Slackbot now chains skills, talks to external tools and automates CRM‑heavy workflows, rather than just replying with summaries.

Microsoft rolls out its own MAI text, voice and image models - Three in‑house models land in Foundry, signaling Microsoft wants less dependence on OpenAI over time.

OpenAI retires older ChatGPT models including GPT‑4o - Legacy options disappear after April 3 as the company simplifies its lineup around GPT‑5.4 variants.

Oracle reportedly plans tens of thousands of layoffs to fund AI - Between 20,000 and 30,000 jobs may be cut to redirect $8–10 billion into AI infrastructure spending.

Sign‑off

That is it for this week in AI.

Same time next week. Bring coffee 🍵